Introduction: The End of Invisible Trust

For nearly three decades, the digital economy has thrived on a quiet but powerful assumption: interconnected systems can be trusted by default. This implicit trust enabled globalization at an unprecedented scale. Enterprises deployed mission-critical applications across continents, integrated platforms from dozens of vendors, and outsourced core infrastructure to global cloud providers without deeply interrogating the underlying control structures or jurisdictional implications. Solution architects, in turn, focused their efforts on performance optimization, scalability, latency reduction, cost efficiency, and seamless functional interoperability. Trust was treated as an ambient, background property: present, reliable, and rarely examined in any rigorous or explicit manner.

That era has now come to a decisive end.

The global digital landscape is undergoing a profound structural transformation. We are shifting from an age of effortless integration to one of deliberate fragmentation, from assumed global neutrality to assertive digital sovereignty, and from implicit trust to explicit, verifiable, continuously monitored, and dynamically defended trust. Geopolitical tensions, regulatory divergence across jurisdictions, rapid technological advancements (particularly in artificial intelligence), and the proliferation of synthetic data are converging to reshape how systems interact, how data flows, and how decisions are made at every level.

Across Asia-Pacific, Europe, the Middle East, and increasingly in Latin America and Africa, governments and large enterprises are actively reassessing their dependencies on foreign-controlled digital infrastructure. Policies mandating data localization, sovereign cloud environments, regional operational control, and even “sovereign AI” have gained significant momentum. In parallel, the explosive growth of AI-generated content and synthetic datasets has blurred the once-clear boundaries between authentic, observed reality and constructed, simulated information. This introduces entirely new dimensions of uncertainty into decision-making pipelines, risk models, and governance frameworks.

This convergence raises a central, operational question that every solution architect must now confront head-on:

On what verifiable, enforceable basis should one system trust another in a fragmented, multipolar digital world?

This is no longer a philosophical debate or a mere compliance checkbox. It is a core architectural, operational, and strategic imperative. Traditional evaluation criteria (functional integration, performance benchmarks, cost models, and even basic security hygiene) remain necessary but are now profoundly insufficient on their own. Trust has emerged as a first-class, non-negotiable architectural concern. It must be designed into systems from the very outset, evaluated across every technical and non-technical layer, continuously monitored in real time, and dynamically recalibrated in response to evolving risks.

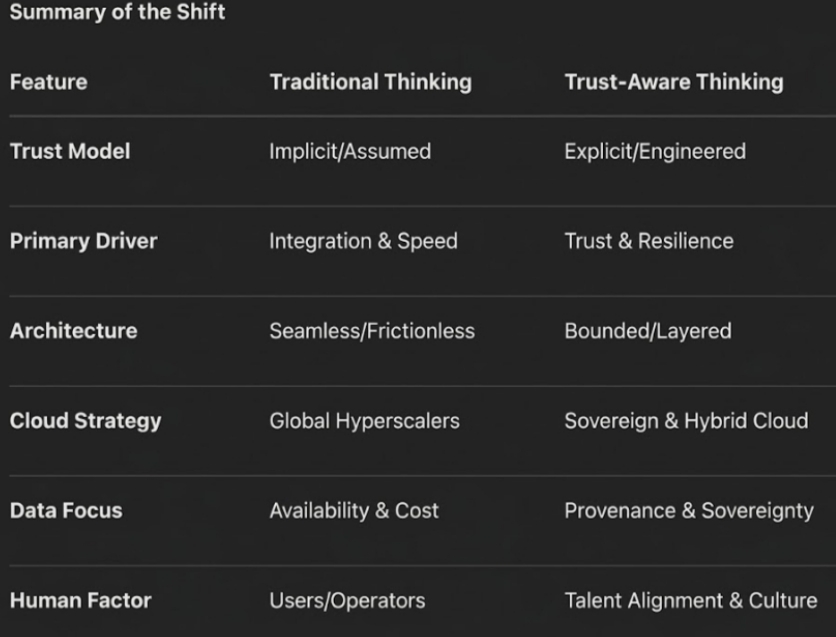

This fundamental shift heralds the rise of what I term the trust economy, where trust is no longer merely a social or relational construct but a quantifiable, engineerable, measurable, and governable attribute of digital architectures. As one CIO of a major Southeast Asian conglomerate confided to me during a 2025 strategy offsite, “In earlier architectures, trust was assumed and rarely questioned. In today’s architectures, trust must be explicitly engineered, and constantly defended against forces we cannot fully control.”

The implications for the solution architect’s role are nothing short of transformative. No longer primarily a system integrator or technical bridge-builder, the modern solution architect evolves into a trust orchestrator. This new mandate involves not only enabling secure and efficient connectivity but also defining the precise conditions under which connectivity is permissible, establishing verifiable boundaries at every layer, and anticipating risks that span technical vulnerabilities, jurisdictional conflicts, regulatory shifts, and geopolitical shocks.

In the sections that follow, I draw on my observations from more than two dozen enterprise engagements across Asia, Europe, and the Middle East between 2023 and 2026 to explore this paradigm shift in depth. We move from traditional integration-first thinking to a trust-first architectural mindset. I introduce a practical five-dimensional Trust Economy Framework that has proven effective in real client transformations. We examine infrastructure challenges with expanded, live case narratives, including recent sovereign cloud shifts in Asia-Pacific and the March 2026 drone strikes on AWS facilities in the UAE and Bahrain. We address the synthetic reality challenges posed by AI-generated data, detail the often-overlooked process and talent dimensions, outline evolved due diligence practices (what I call Due Diligence 2.0), discuss inevitable trade-offs, and conclude with a forward-looking view on programmable trust. The analysis is grounded in observed enterprise behaviors, recent geopolitical developments, architectural lessons from the field, and credible third-party research.

Consider a recent dialogue I facilitated in a boardroom in Singapore in late 2025. The CFO turned to the CTO and asked pointedly: “We have spent millions on a global cloud migration that delivered 40% cost savings and lightning-fast analytics. But if a single geopolitical event or regulatory change can cut off access to our core customer data overnight, have we truly built a resilient business, or just a faster one?” The room fell silent. That question captures the new reality architects must navigate.

From System Design to Trust Design

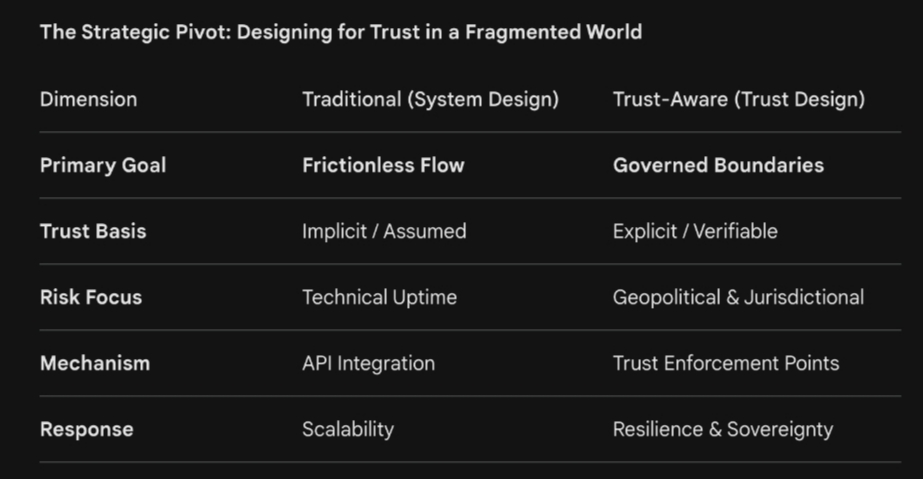

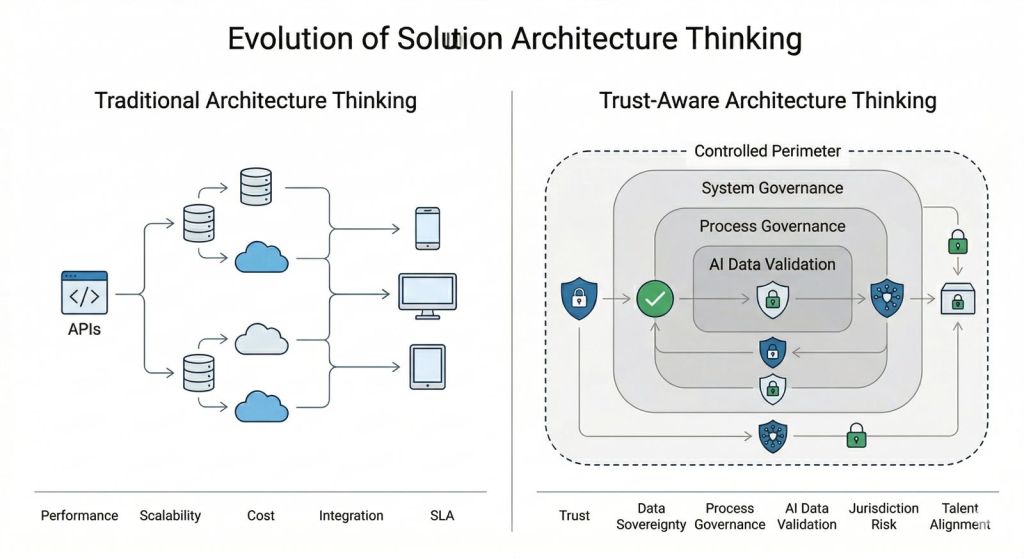

Traditional solution architecture was dominated by integration-first logic. The primary goal was to minimize friction: connect disparate systems via robust APIs, middleware layers, enterprise service buses, and modern service-oriented or microservices architectures to create seamless, frictionless digital experiences. Interoperability standards, loose coupling principles, elastic scaling, and container orchestration dominated design discussions. Trust mechanisms (such as basic authentication protocols, SSL/TLS encryption, and static role-based access control) were often treated as necessary hygiene factors or bolted-on security layers rather than foundational design elements integral to the architecture itself.

In today’s fragmented world, this integration-first approach has become a significant strategic liability. Unrestricted or poorly bounded integration exposes organizations to jurisdictional conflicts, unauthorized data exfiltration, strategic vendor lock-in or dependency risks, supply-chain compromises, and cascading failures when geopolitical or regulatory shocks materialize without warning.

The necessary paradigm shift is therefore clear and urgent: from Integration-First to Trust-First Architecture.

In trust-first designs, connectivity is never automatic, unconditional, or assumed. Every proposed interaction, whether between internal microservices, external SaaS platforms, cross-border data pipelines, third-party AI inference endpoints, or hybrid cloud workloads, must explicitly answer three foundational questions at design time and runtime:

Can this system (or component) be trusted? Evaluated against verifiable attributes including provenance, current security posture, compliance certifications, behavioural history, and supply-chain integrity.

To what extent can it be trusted? Trust exists on a spectrum, not as a binary state. For example, a system might receive read-only access for non-sensitive metadata but be denied full transactional privileges for regulated personal data.

Under what precise conditions should that trust be granted, maintained, or revoked? Conditions tied to real-time context (geographic location, time of day, threat intelligence signals), evolving policy (regulatory changes or sanctions), observed behaviour (anomaly detection via AI), or external events (geopolitical alerts or conflict indicators).
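The three questions above can be sketched as a single decision function. This is a minimal illustration, not a reference implementation: the names `TrustLevel`, `Caller`, `Context`, and `decide_trust`, the jurisdiction codes, and the 0.7 anomaly threshold are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class TrustLevel(IntEnum):
    """Trust as a spectrum rather than a binary state."""
    NONE = 0        # deny all access
    READ_ONLY = 1   # non-sensitive metadata only
    FULL = 2        # transactional access, including regulated data


@dataclass(frozen=True)
class Caller:
    attested: bool      # provenance and security posture have been verified
    certified: bool     # holds the compliance certifications we require
    jurisdiction: str   # where the caller currently operates (illustrative code)


@dataclass(frozen=True)
class Context:
    allowed_jurisdictions: frozenset  # set by policy; may change at runtime
    anomaly_score: float              # 0.0 (normal) to 1.0 (highly anomalous)


def decide_trust(caller: Caller, ctx: Context, anomaly_limit: float = 0.7) -> TrustLevel:
    # Question 1: can this system be trusted at all?
    if not caller.attested:
        return TrustLevel.NONE
    # Question 3: conditions under which trust is withheld or revoked.
    if caller.jurisdiction not in ctx.allowed_jurisdictions:
        return TrustLevel.NONE
    if ctx.anomaly_score >= anomaly_limit:
        return TrustLevel.NONE
    # Question 2: to what extent can it be trusted?
    return TrustLevel.FULL if caller.certified else TrustLevel.READ_ONLY
```

Because the function is evaluated per request, the same caller can hold full access one minute and none the next if the policy set or the anomaly signal changes, which is the runtime half of the design-time-and-runtime requirement.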

This mindset leads directly to trust-aware architecture, where trust mechanisms are embedded natively into the design fabric rather than retrofitted as an afterthought. APIs evolve from simple data-exchange endpoints into sophisticated trust enforcement points that incorporate dynamic authentication, contextual authorization engines, real-time risk scoring models, automated revocation logic, and cryptographic attestation of compliance. Data pipelines now mandate end-to-end provenance tracking, cryptographic validation of data origins, integrity checks against synthetic contamination, and automated bias-detection gates. Access control models shift decisively from static role-based systems to dynamic, attribute-based, or policy-as-code frameworks that adapt instantaneously to risk signals drawn from integrated threat intelligence feeds, behavioural analytics platforms, or regulatory update streams.

A vivid, real-world illustration comes from a large cross-border digital transformation program I observed firsthand in 2024–2025 involving a multinational financial services group with operations spanning Southeast Asia and Europe. Technically, the core banking platform integration performed flawlessly during load testing: sub-50 ms latency, 99.999% uptime, and seamless automated failover. Post-deployment regulatory audits, however, uncovered critical vulnerabilities rooted not in code but in ambiguous ownership of data assets across jurisdictions and inconsistent enforcement of access controls. One regional subsystem retained elevated administrative privileges that directly violated emerging data localization rules in a key ASEAN market. The architecture “worked” from a functional and performance standpoint, but the underlying trust model collapsed under regulatory scrutiny. The result: a six-month delay in go-live, millions in rework costs, temporary service disruptions for high-net-worth clients, and a sharp drop in internal confidence. As the program lead architect later reflected in a debrief I attended, “We optimized for speed and scale, but we never designed for trust. That was the real failure.”

This case underscores a deeper, recurring insight I have seen repeated across engagements: systems can execute correctly according to functional specifications while remaining fundamentally untrustworthy from governance, risk, regulatory, or strategic perspectives. Solution architects must therefore expand their professional scope and accountability to explicitly include:

- Mapping and enforcing trust boundaries at every integration layer: network, application, data, identity, and even AI model layers.

- Designing verification mechanisms such as continuous attestation protocols, cryptographic proofs of compliance, immutable audit trails using blockchain-inspired ledgers, and automated compliance scoring engines.

- Anticipating and modelling multi-dimensional risks: technical (zero-day vulnerabilities), jurisdictional (e.g., implications of the U.S. CLOUD Act), regulatory (GDPR vs. local data sovereignty laws), and geopolitical (sanctions, trade wars, or physical infrastructure attacks).

Established Zero Trust principles, as formalized in NIST SP 800-207 (2020) and updated in subsequent industry guidance, provide a strong foundational reference. However, they must now be extended far beyond traditional network perimeters to encompass data provenance, AI model integrity, cloud jurisdictional control, process governance, and human decision-making factors.

The Trust Economy Framework

To make trust actionable, measurable, and scalable across large enterprises, I propose a practical five-dimensional model that has guided several successful transformations I have advised:

Trust = f(Systems + Cloud/Infrastructure + Data + Process + Talent)

Trustworthiness is an emergent property of alignment across these five interdependent dimensions, and it is fatally undermined by misalignment in any one of them. A failure in any single dimension can cascade rapidly and compromise the entire ecosystem, often in ways that are invisible until a crisis strikes.
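One way to make the "any single dimension can compromise the whole" property concrete is to aggregate by the weakest dimension rather than by an average. The sketch below is an assumption about how the function f might be instantiated, not a definition taken from the framework; the dimension keys, the 0.0 to 1.0 scale, and the function names are illustrative.

```python
DIMENSIONS = ("systems", "infrastructure", "data", "process", "talent")


def composite_trust(scores: dict[str, float]) -> float:
    """Return the weakest dimension's score (each score in 0.0..1.0).

    Taking the minimum, instead of a mean, means a strong score in four
    dimensions cannot mask a failure in the fifth.
    """
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    return min(scores[d] for d in DIMENSIONS)


def weakest_dimension(scores: dict[str, float]) -> str:
    """Name the dimension that currently limits overall trust."""
    return min(DIMENSIONS, key=lambda d: scores[d])
```

With scores of 0.9 everywhere except a process score of 0.3, an average would report 0.78 while this aggregation reports 0.3 and points at process as the place to invest.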

The accompanying figure illustrates the fundamental transition from Integration-First to Trust-First architecture, where the priority shifts from frictionless connectivity to governed boundaries. It signifies a move away from implicit, assumed trust toward explicit and verifiable mechanisms that justify system interactions at both design and runtime. By expanding risk focus beyond technical uptime to include geopolitical and jurisdictional factors, the framework enables architects to build for long-term resilience and sovereignty. Ultimately, this shift redefines the solution architect’s role as a trust orchestrator capable of navigating a fragmented and multipolar digital world.

Systems & Platforms

Modern enterprises operate in complex, heterogeneous ecosystems comprising legacy ERP systems, SaaS applications, custom-developed microservices, third-party APIs, open-source libraries, and increasingly autonomous AI-driven components. Dependency opacity is a pervasive risk: architects frequently lack full visibility into nested sub-components, transitive vendor relationships, or hidden supply-chain elements. Hidden risks, such as compromised libraries or unvetted AI models, can introduce backdoors, bias amplification, or sudden unavailability. Mitigation requires comprehensive software bills of materials (SBOMs), runtime attestation, regular dependency trust scoring, and automated vulnerability scanning tied to trust revocation policies.
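Dependency opacity is largely a graph problem: a flagged component several levels down is invisible if only direct dependencies are reviewed. The following sketch walks a simplified dependency graph of the kind an SBOM could supply. The graph shape (a plain dict of component name to direct dependencies), the component names, and the function names are assumptions for illustration; real SBOM formats carry far more detail.

```python
def transitive_deps(root: str, graph: dict[str, list[str]]) -> set[str]:
    """Collect every component reachable from root, at any depth."""
    seen: set[str] = set()
    stack = [root]
    while stack:
        node = stack.pop()
        for dep in graph.get(node, ()):
            if dep not in seen:       # also protects against dependency cycles
                seen.add(dep)
                stack.append(dep)
    return seen


def untrusted_deps(root: str, graph: dict[str, list[str]], flagged: set[str]) -> list[str]:
    """Return flagged components that root depends on, directly or transitively."""
    return sorted(transitive_deps(root, graph) & flagged)
```

A non-empty result from `untrusted_deps` is the kind of signal that could feed a trust revocation policy, for example by blocking deployment until the flagged component is replaced or re-attested.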

Cloud & Infrastructure

Infrastructure ultimately determines physical, operational, and legal control over data residency, access rights, and applicable legal frameworks. Global hyperscalers deliver unmatched scale and innovation but introduce significant extraterritorial risks, as highlighted by the U.S. CLOUD Act of 2018, which allows U.S. authorities to compel providers subject to U.S. jurisdiction to produce data under their control regardless of where it is stored. Enterprises must evaluate not only technical SLAs and uptime guarantees but also jurisdictional exposure and geopolitical resilience.

Data

Data remains the lifeblood of decision-making. In the AI era, the fundamental shift from purely observed real-world data to increasingly AI-generated synthetic data introduces new risks of bias amplification, provenance erosion, and “model collapse” where repeated training on synthetic outputs degrades overall quality and diversity. Architects must enforce strict data lineage tracking, multi-stage validation pipelines, and explainability layers.

Process

Processes operationalize trust through clearly defined, enforceable workflows for access provisioning, change management, incident response, auditing, and continuous improvement. Weak or inconsistently followed processes remain the root cause of the majority of high-profile breaches and operational failures, even in technically sophisticated environments.

Talent & Culture

The human dimension (design decisions, day-to-day operational execution, incentive structures, and cultural interpretations of accountability and risk) profoundly influences overall trust. Global, distributed teams introduce both diversity advantages and potential misalignments in risk tolerance, escalation norms, and governance maturity.

As I often remind clients, “Trust is not resident in any single layer. It is an emergent property arising from deliberate, ongoing alignment across systems, infrastructure, data, processes, and people.”

Infrastructure Trust: Clouds, Sovereignty, and Strategic Dependency

Cloud computing has been one of the most transformative developments in modern technology, delivering elasticity, global reach, and cost efficiencies once unimaginable. Yet its highly concentrated, often foreign-headquartered nature is now being rigorously re-evaluated in light of escalating geopolitical realities.

The Hyperscaler Question

Many organizations continue to rely heavily on U.S.-headquartered hyperscalers (AWS, Microsoft Azure, Google Cloud) for their unparalleled capabilities in compute, storage, AI services, and global networking. However, strategic and sovereign concerns now dominate board-level discussions: potential subjection to extraterritorial laws such as the CLOUD Act, risks of sudden data access or service disruption during conflicts or sanctions, and loss of control over truly critical national or enterprise data.

Expanded Case Study: Asian Conglomerates and the Sovereign Cloud Pivot

Several large conglomerates, banks, and government-linked entities in the Asia-Pacific region have accelerated their reassessment of hyperscaler dependency since 2024. In India, the Digital Personal Data Protection Act, combined with RBI data localization mandates for payment systems, has pushed major banks and fintech players toward localized sovereign cloud deployments. Airtel’s indigenous cloud platform and the government’s MeghRaj initiative have built meaningful domestic capacity. Enterprises now adopt sophisticated hybrid models, leveraging global hyperscalers for non-sensitive, innovation-focused workloads while routing regulated or sensitive data to sovereign or private clouds.

In Malaysia, the government announced a RM2 billion sovereign AI cloud initiative in 2025 to support national digital ambitions while maintaining control. Indonesia’s Palapa Ring project continues to underpin distributed sovereign infrastructure. Japan and South Korea have leaned heavily on domestic providers such as NTT, Fujitsu, NHN Cloud, and KT Cloud, all backed by stringent national security certifications. A December 2025 IISS research paper highlights how Japan, the Philippines, Singapore, and Thailand are explicitly adopting cloud technologies for national-security and defence purposes, balancing innovation with sovereignty. Gartner and industry forecasts indicate that by 2027, 75% of Asia-Pacific organizations will modernize legacy cloud environments as AI workloads drive further shifts toward sovereign and localized infrastructure.

Disaster Recovery Reimagined in a Geopolitical Age

Traditional disaster recovery (DR) strategies emphasized geographic redundancy and cost optimization, placing backup sites as far apart as possible while minimizing expense. In the trust economy, priorities have shifted dramatically toward jurisdictional redundancy and near-DR within trusted legal spheres. Organizations now weigh “trusted jurisdiction distance” alongside physical distance, favoring DR setups that maintain legal independence and operational control even if the sites are geographically closer. “We no longer ask ‘How far is the backup site?’ but ‘Under whose laws does it operate, and can we still access it if relations sour?’” remarked a head of infrastructure at a Thai conglomerate during a 2025 review I led.
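The shift from "how far" to "under whose laws" can be expressed as a selection rule in which jurisdiction is a hard filter and distance is only a tie-breaker. The sketch below is one possible reading of that idea; the `Site` type, the `pick_dr_site` function, the 100 km minimum separation, and the choice to require a jurisdiction different from the primary are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Site:
    name: str
    jurisdiction: str    # legal regime the site operates under (illustrative code)
    distance_km: float   # physical distance from the primary site


def pick_dr_site(primary: Site, candidates: list[Site], trusted: set[str],
                 min_distance_km: float = 100.0) -> Optional[Site]:
    """Pick the nearest candidate in a trusted, legally independent jurisdiction."""
    eligible = [
        s for s in candidates
        if s.jurisdiction in trusted                  # trusted legal sphere
        and s.jurisdiction != primary.jurisdiction    # jurisdictional redundancy
        and s.distance_km >= min_distance_km          # keep some physical separation
    ]
    # Among eligible sites, prefer the nearest ("near-DR"); None if nothing qualifies.
    return min(eligible, key=lambda s: s.distance_km, default=None)
```

Under this rule a site 350 km away in a trusted neighbouring jurisdiction is preferred over one 9,000 km away in an untrusted one, which inverts the traditional "as far apart as possible" heuristic.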

The Rise of Dual (and Multi) Systems, and the March 2026 Wake-Up Call

To hedge against single points of failure, enterprises increasingly deploy parallel systems across different providers and jurisdictions. Benefits include resilience, compliance flexibility, and reduced strategic dependency; drawbacks include higher costs (often 30-50% premium), synchronization complexity, data consistency challenges, and integration overhead. Board-level conversations have shifted noticeably: from “Can we afford the cost of redundancy?” to “Can we afford the existential strategic risk of single-jurisdiction dependency?”

The urgency of this shift was dramatically illustrated in March 2026 when Iranian drone strikes damaged three Amazon Web Services data centers in the United Arab Emirates and Bahrain amid escalating Middle East conflict. Amazon confirmed structural damage, power disruptions, and service outages affecting regional customers. Reuters, CRN, and cybersecurity analysts described the incidents as the first known military strikes on commercial hyperscale cloud infrastructure, highlighting how data centers have become strategic targets in modern hybrid warfare. One affected enterprise CIO told me in a follow-up call: “We had DR plans. None of them assumed our primary cloud provider’s facilities would be physically bombed. That changed everything.”

Europe’s Gaia-X initiative continues to exemplify the push for federated, interoperable sovereign infrastructure. The November 2025 launch of the “Danube” architecture release at the Gaia-X Summit in Porto marked a major step forward in automated compliance, cross-ecosystem interoperability, and reduced non-European dependencies. Gartner forecasts continued strong growth in sovereign cloud IaaS spending, particularly in Mature Asia/Pacific and Europe.

Data & AI Trust: The Synthetic Reality Challenge

The rise of artificial intelligence has fundamentally altered the nature of data itself: from primarily observed and collected from real-world events to increasingly generated, simulated, and augmented by models.

From Observed Data to Generated (and Synthetic) Data

Historically, data carried inherent, if imperfect, grounding in observable reality. Verification occurred through cross-referencing with physical events or multiple sources. Today, data can be entirely generated by AI models for training, augmentation, privacy preservation, or scenario simulation. Synthetic data promises scalability and privacy benefits but carries risks of amplifying hidden biases, introducing subtle inaccuracies, and obscuring provenance.

Real-World Risks and Expanded Case Studies

Synthetic data does not exist in a vacuum. When source datasets contain biases, synthetic outputs tend to replicate and often amplify them. A 2025 scoping review in healthcare AI highlighted how synthetic tabular data generated from small, non-diverse samples can dangerously assume population homogeneity and mislead clinical models. Classic examples persist and evolve:

- The 2019 healthcare cost algorithm (used for ~200 million patients) used costs as a proxy for need and systematically underestimated requirements for Black patients due to historical access disparities. Recalibration using direct health measures dramatically improved equity.

- The COMPAS recidivism tool showed racial bias, with higher false-positive rates for Black defendants.

- Amazon’s internal hiring algorithm, trained on historical male-dominated resumes, penalized female applicants by downgrading resumes containing “women’s” indicators.

Recent 2025 studies on synthetic data in medical AI warn of “synthetic trust”: an unwarranted confidence in outputs that may propagate biases or lose clinically significant variation. One researcher noted: “Generating 1,000 synthetic patient records from only ten individuals from an underrepresented group assumes homogeneity that simply does not exist in reality.”

Architectural Responses for Trustworthy AI

Solution architects must implement end-to-end data lineage tracking (leveraging standards such as W3C PROV), multi-stage validation pipelines with human-in-the-loop oversight for high-stakes decisions, bias-detection tooling, and explainability techniques (SHAP, LIME). Trust in AI systems is ultimately not about raw accuracy alone; it is about traceability, accountability, verifiable fairness, and the ability to audit decisions back to their data origins.
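The ability to audit a dataset back to its origins can be illustrated with a hash-linked lineage record. This is a deliberately simplified sketch and not an implementation of W3C PROV: the `LineageRecord` fields, the three `kind` labels, and the in-memory `store` dict are assumptions chosen to keep the example short.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class LineageRecord:
    dataset: str
    kind: str           # "observed", "synthetic", or "derived" (illustrative labels)
    derived_from: str   # digest of the parent record, "" for a root dataset


def digest(record: LineageRecord) -> str:
    """Content hash of a record; changing any field changes the digest."""
    payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def trace(record_digest: str, store: dict[str, LineageRecord]) -> list[LineageRecord]:
    """Walk from a dataset back to its root; a KeyError means lineage is broken."""
    chain = []
    while record_digest:
        record = store[record_digest]
        chain.append(record)
        record_digest = record.derived_from
    return chain


def has_synthetic_ancestry(record_digest: str, store: dict[str, LineageRecord]) -> bool:
    """True if the dataset or any of its ancestors was synthetically generated."""
    return any(r.kind == "synthetic" for r in trace(record_digest, store))
```

A validation gate could call `has_synthetic_ancestry` before a dataset is admitted to training for a high-stakes model and route positive cases to human review rather than rejecting them outright.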

The accompanying figure illustrates a fundamental shift in the mindset of a solution architect. It highlights that while traditional systems focused on performance and seamless connectivity through global hyperscalers, modern architectures must prioritize verifiable trust and jurisdictional sovereignty. By shifting from implicit assumptions to explicit engineering, architects move beyond purely technical uptime to manage geopolitical risk and data provenance. Ultimately, this summary defines the new “Trust Economy” mandate: ensuring every connection is secure, compliant, and culturally aligned by design.

Process as the Core Engine of Trust

Process remains the most critical, and most frequently overlooked, dimension of trust. Most system failures and breaches I have investigated trace not to lack of technical capability or clever exploits but to weak, inconsistently applied, or poorly governed processes.

Why Technically Sound Systems Still Fail

High-profile incidents repeatedly demonstrate that even robust platforms collapse when processes for access review, change approval, or incident escalation are inadequate.

Access Control and Governance Case Insights

Zero Trust adoption is accelerating rapidly. Gartner predicts that by 2028, 50% of organizations will implement zero-trust postures specifically for data governance in response to unverified AI-generated data. Over 70% of organizations plan full Zero Trust implementations by 2026.

The Process Trust Loop

I recommend a closed-loop model: Define → Execute → Monitor → Audit → Adapt. This loop, embedded in policy-as-code tools, ensures trust remains dynamic.
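A single pass through the loop can be sketched for one narrow process, periodic access review. Everything here is hypothetical and chosen for illustration: the `AccessPolicy` type, the idle-days threshold, and the rule that halves the threshold when more than half of the grants turn out to be stale.

```python
from dataclasses import dataclass, field


@dataclass
class AccessPolicy:
    max_idle_days: int                           # Define: the rule being enforced
    audit_log: list = field(default_factory=list)


def run_cycle(policy: AccessPolicy, grants: dict[str, int]) -> dict[str, int]:
    """Run one loop pass; `grants` maps a user to days since last use."""
    # Execute: keep only grants that satisfy the current policy.
    kept = {user: idle for user, idle in grants.items() if idle <= policy.max_idle_days}
    # Monitor: observe what enforcement actually changed.
    revoked = sorted(set(grants) - set(kept))
    # Audit: record the threshold applied and its effect.
    policy.audit_log.append({"threshold": policy.max_idle_days, "revoked": revoked})
    # Adapt: if most grants were stale, tighten the rule for the next cycle.
    if grants and len(revoked) * 2 > len(grants):
        policy.max_idle_days = max(1, policy.max_idle_days // 2)
    return kept
```

The point of the sketch is the feedback edge: the audit record is not just stored but used to change the definition that the next cycle executes, which is what keeps the loop closed.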

Talent & Cultural Trust

Global talent models bring diversity and innovation but also introduce alignment challenges across cultures, regulatory contexts, and incentive structures. Architects must evaluate not only technical skills but cultural fit, governance maturity, and risk interpretation when designing cross-border solutions.

A dialogue I moderated in Dubai in early 2026 captured this: “Our Indian team interprets ‘urgent escalation’ differently from our European compliance leads,” said one regional CTO. “That cultural delta almost caused a regulatory violation last quarter.”

Due Diligence 2.0: From Static Checklists to Continuous Trust Monitoring

Due diligence must evolve from one-time vendor questionnaires to continuous, automated evaluation incorporating jurisdiction risk scoring, data control maturity, process robustness, and talent governance metrics. Tools now include AI-driven compliance dashboards and real-time threat intelligence integration.

The Evolving Role of the Solution Architect

Today’s architect is simultaneously trust orchestrator, risk navigator, governance enabler, and strategic advisor, balancing innovation velocity with sovereignty and resilience requirements.

Trade-offs and Practical Considerations

Trust investments inevitably involve trade-offs: higher costs for redundancy and sovereignty versus operational efficiency; slower innovation cycles due to rigorous verification versus speed-to-market; global scale versus localized control. Successful organizations treat these as deliberate strategic portfolio decisions rather than pure technical optimizations.

The Future: Programmable Trust

Emerging technologies, including policy-as-code engines, decentralized identity systems, cryptographic attestations, AI-driven risk engines, and federated learning, point toward dynamic, computable, context-aware trust that can be programmed, audited in real time, and adapted at enterprise scale.

Conclusion: Trust as the Defining Architecture of the Next Digital Era

In a fragmented world, the competitive advantage shifts decisively from the most connected systems to the most trusted ones. Solution architects who master trust orchestration will define the resilient, sovereign, and ethical digital infrastructure of the future.

The evolution from system design to trust design is not a temporary adjustment – it is a structural shift that will define the next phase of the digital economy. What began as a response to geopolitical uncertainty, regulatory divergence, and the rise of artificial intelligence is now solidifying into a new architectural doctrine: systems must not only perform, they must justify the basis on which they are trusted.

For solution architects and master system integrators, this represents both a challenge and an opportunity. The challenge lies in navigating a world where the variables influencing architecture are no longer purely technical. Control is fragmented, data is increasingly synthetic, processes are under constant scrutiny, and talent operates across diverse cultural and regulatory contexts. The opportunity, however, lies in redefining the role of architecture itself: from enabling connectivity to governing trust at scale.

The most important realization is that trust cannot be delegated. It cannot be outsourced to a cloud provider, assumed through a compliance certificate, or inferred from historical reliability. Trust must be continuously earned through design, enforced through process, and validated through evidence. This demands a shift from static architectures to adaptive ones: systems that can assess context, interpret risk, and adjust behaviour dynamically.

In practical terms, this means architects must begin to think in terms of trust boundaries rather than system boundaries, verification layers rather than integration layers, and resilience models that account not only for technical failure, but for legal, geopolitical, and epistemic uncertainty. Dual systems, sovereign cloud strategies, jurisdiction-aware disaster recovery, and AI validation pipelines are not inefficiencies; they are early indicators of a more mature, trust-centric design philosophy.

Equally, the elevation of process and talent within the trust equation signals a broader truth: technology alone cannot guarantee trust. It is the interplay between systems, governance, and human judgment that ultimately determines whether an architecture is robust or fragile. Organizations that fail to align these dimensions may achieve short-term efficiency but will struggle to maintain long-term resilience.

Looking ahead, trust will become increasingly programmable, measurable, and auditable. Advances in policy-as-code, decentralized identity, cryptographic attestation, and AI-driven risk modelling will enable systems to make trust decisions in real time. Yet, even as these capabilities mature, the responsibility for defining what constitutes “trustworthy” will remain deeply human, shaped by values, context, and strategic intent.

Ultimately, the competitive advantage in the trust economy will not belong to those who build the fastest or most integrated systems, but to those who build systems that others are willing, and able, to trust under conditions of uncertainty.

“In the past, we built systems to scale across the world. In the future, we will build systems that understand the world well enough to decide when not to trust it.”

The accompanying visual captures the decisive transition from Integration-First logic to the Trust-First architectural mindset necessitated by a fragmented, multipolar digital world. On the left, traditional thinking treats trust as an “invisible, ambient property,” focusing exclusively on minimizing friction to achieve performance and scale. In stark contrast, the right side illustrates the rise of the Trust Orchestrator, where connectivity is never automatic but instead explicitly engineered through a “Trust Economy Framework.” This trust-aware architecture embeds layers of system governance, data validation, and jurisdictional safeguards directly into the design fabric to ensure that every interaction (whether human, cloud, or AI-generated) is verifiable, enforceable, and resilient against geopolitical and regulatory shocks.

References

1. NIST SP 800-207 (2020). Zero Trust Architecture.

2. Reuters (March 2026). Amazon confirms drone strikes damage AWS facilities in UAE and Bahrain.

3. IISS (December 2025). Cloud Adoption for National-Security and Defence Purposes: Four Case Studies from the Asia-Pacific.

4. Gartner (2026). Predicts on Zero Trust Data Governance.

5. Gaia-X Association (2025). Danube Release and Summit Updates.

6. Various 2025 studies on synthetic data risks in healthcare AI (The Lancet Digital Health, etc.).
